- Toner-based printers and MFPs

- OKI printers and MFPs

- Ricoh printers and MFPs

- Consumables

- OKI cartridges

- OKI color cartridges

- Cartridges for OKI MC853 / MC873

- Cartridges for OKI C301 / C321 / C331 / MC332 / MC342

- Cartridges for OKI MC860 / MC851 / MC861

- Cartridges for OKI C310 / C510 / C511 / MC352 / MC362 / MC562

- Cartridges for OKI C911 / C931

- Cartridges for OKI C810 / C830 / C801 / C821

- Cartridges for OKI MC760 / MC770 / MC780

- Cartridges for OKI C9600 / C9650 / C9800 / C9850 / C9655

- Cartridges for OKI C822 / C831 / C841

- Cartridges for OKI C5850 / C5950 / MC560

- Cartridges for OKI C5800 / C5900 / C5550mfp

- Cartridges for OKI C711 / C710

- Cartridges for OKI C610

- Cartridges for OKI C612

- Cartridges for OKI C532 / C542 / MC573

- Cartridges for OKI C332 / MC363

- OKI monochrome cartridges

- Ricoh cartridges

- Canon cartridges

- Epson cartridges

- Konica-Minolta Develop Copier cartridges

- Kyocera-Mita Copier cartridges

- Ricoh Copier cartridges

- Samsung Laser cartridges

- Kyocera Laser cartridges

- Lexmark Laser cartridges

- Panasonic Laser cartridges

- Oki Laser cartridges (compatible)

- Ricoh Laser cartridges (compatible)

- Xerox Laser Color cartridges

- Xerox Laser Mono cartridges

- Cartridge refilling

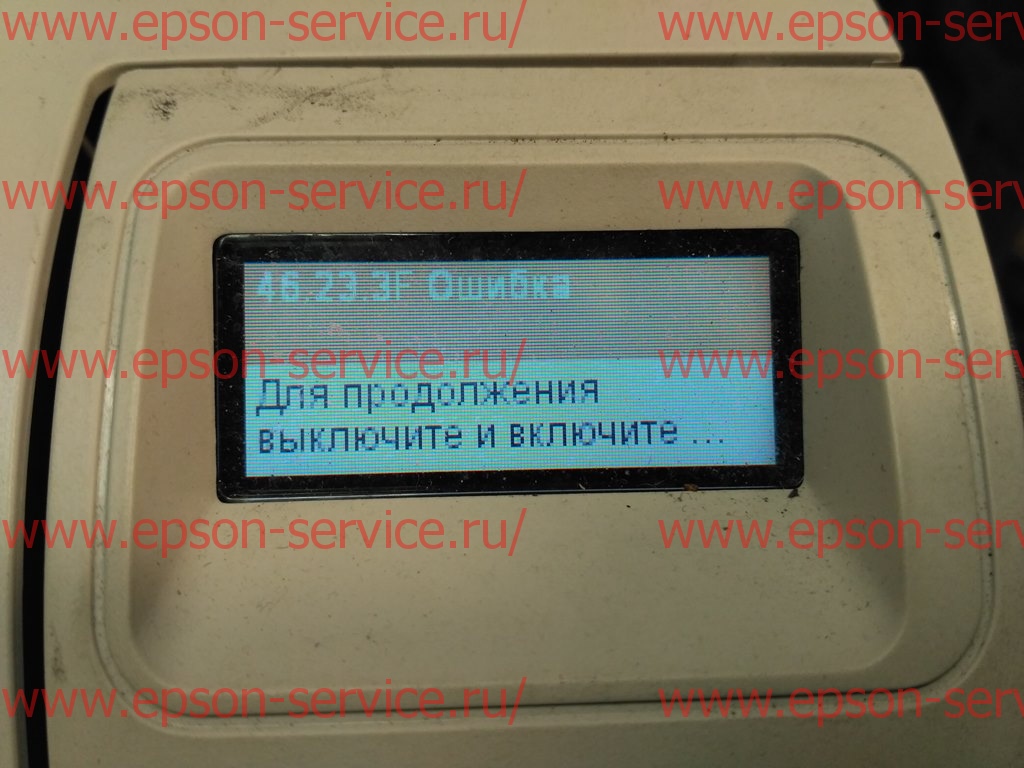

- Counter reset

- Inkjet printers and MFPs

- Wide-format equipment

Service requests

+7 (473) 258-26-24

+7 (910) 340-11-15 (emergency)

info@epson-service.ru

Business hours

Mon-Fri: 9:00 - 18:00
